AI Infrastructure and Its Implications for the Power Grid

Technion Researchers Present an Energy Framework for the Age of Artificial Intelligence

In recent years, the use of artificial intelligence has surged across a wide range of fields and sectors. It is becoming clear that the development of large language models (LLMs) such as GPT poses an unprecedented challenge to the energy sector.

In a paper published recently in Energies, Technion-Israel Institute of Technology researchers present an extensive review of the challenges of energy integration, primarily from the perspective of the power grid. The paper provides a comprehensive overview of the energy implications of the massive data centers that train AI systems and proposes a framework for addressing these challenges. The study was led by Professor Yoash Levron and doctoral student Elinor Ginzburg-Ganz from the Andrew and Erna Viterbi Faculty of Electrical and Computer Engineering at the Technion, in collaboration with researchers from Tallinn University in Estonia.

Over the past five years, the number of data centers worldwide has nearly tripled, and today there are approximately 1,100 such centers in operation. These facilities perform extensive and complex computing tasks, such as training on massive datasets with synchronization across hundreds of thousands of processors, among them advanced graphics processing units (GPUs). This rapid development has been enabled by accelerated advances in both hardware and software, but it places an enormous burden on power grids.

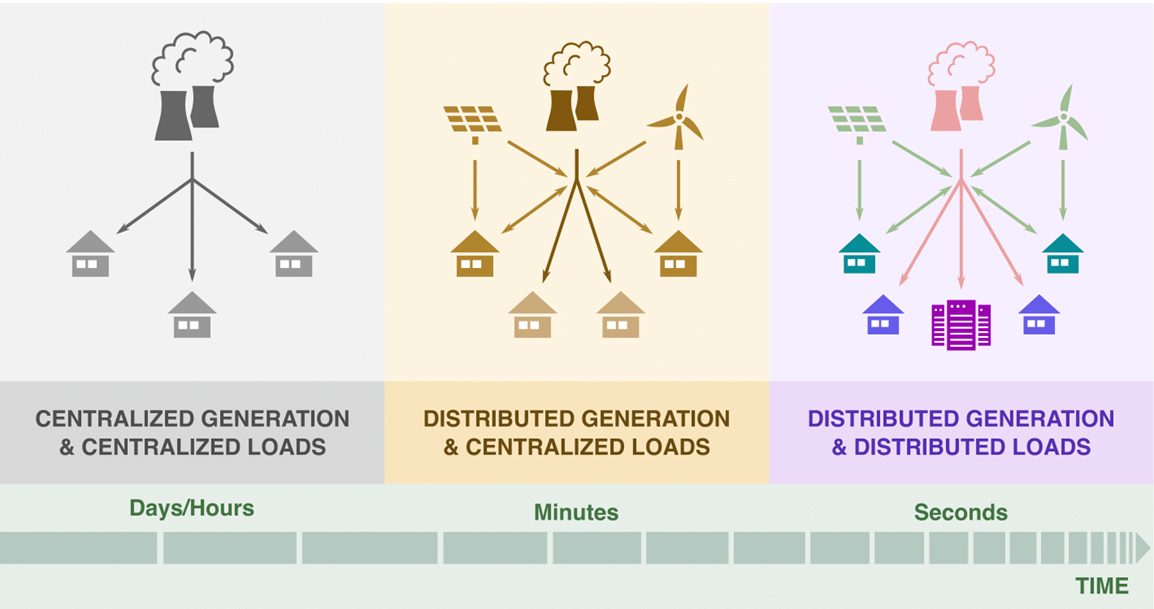

AI centers are characterized not only by extremely high electricity consumption but also by rapid fluctuations in load. This phenomenon threatens the stability of power grids and necessitates technological, operational, and other adaptations. According to estimates, global power demand from AI centers will double within just five years to approximately 122 gigawatts, driven in part by megaprojects such as the Stargate supercomputer, which alone is expected to consume 5 gigawatts.

Technion researchers warn that the challenges go beyond increasing capacity: this is a fundamental change in the nature of electricity consumption, involving geographic distribution, rapid fluctuations, voltage sensitivity, and significant environmental impacts. Supply failures – such as the one that recently occurred at the American energy company Dominion Energy – could not only directly harm AI centers but also damage the generators intended to supply them with power in the event of a grid failure.

The researchers recommend formulating a comprehensive strategy that includes precise characterization of the unique electricity requirements of AI environments, identification of bottlenecks and other failure factors, development of an adapted and coordinated economic policy, and thorough, practical consideration of environmental issues.

This research did not receive any external financial support.

https://www.mdpi.com/1996-1073/19/1/137